This guide walks through adding a new Model Provider and making its models available across the platform. It focuses on the OpenAI Compatible path because it is the most flexible. The same steps apply to OpenAI, Anthropic, Azure AI Foundry, and Vertex AI with minor field-name differences.

For background on what Model Providers and Model Defaults are, see AI Inference.

Gather the following from the provider you plan to connect:

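- The endpoint's base URL (`base_url`).
- The API key or other credential for the endpoint.
- The model IDs you want to expose (for example, `gpt-4o-mini` or `llama-3.3-70b-instruct`). These must match what the endpoint accepts.

You need admin access to the Administration app on Method.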

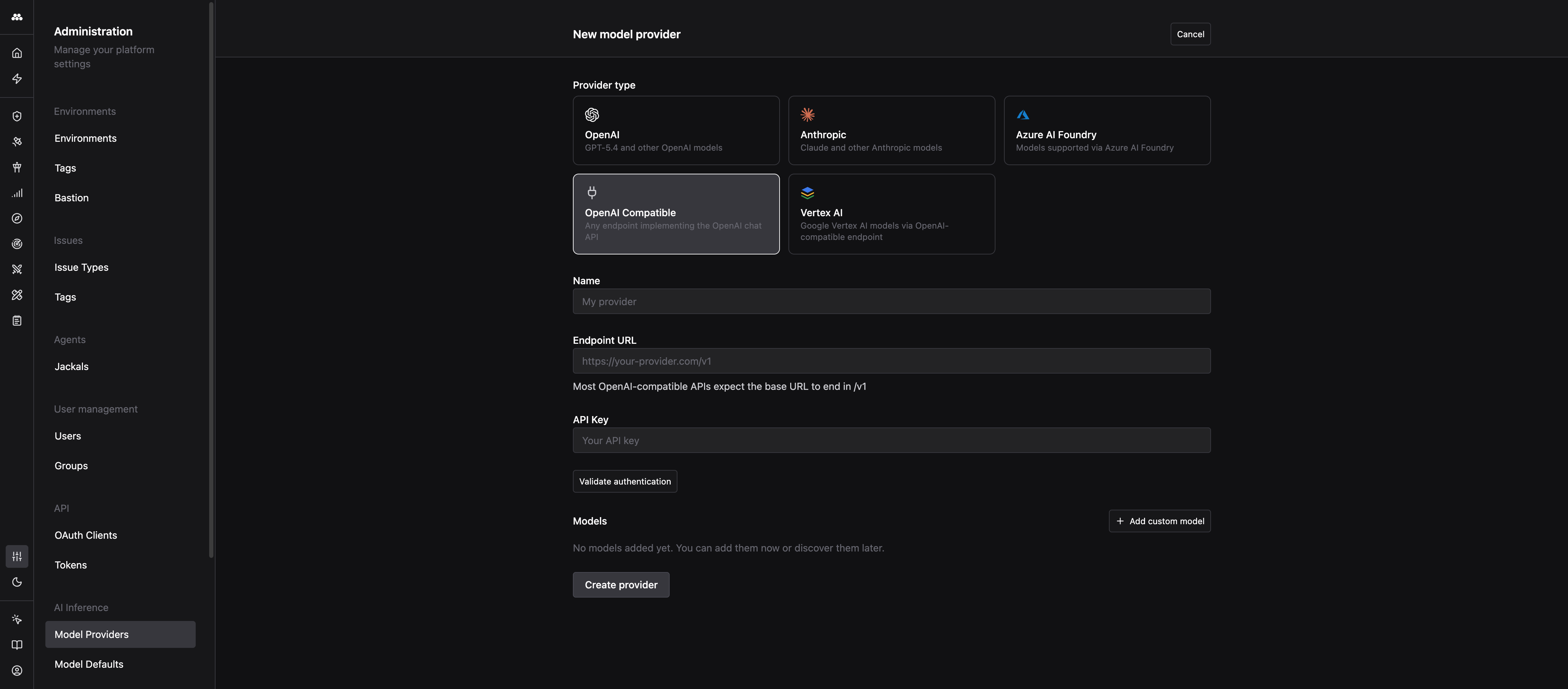

Navigate to Admin > Model Providers, then click the + icon next to “Model providers” in the left column.

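Select the tile that matches your endpoint. The provider types named in this guide are OpenAI, Anthropic, Azure AI Foundry, Vertex AI, and OpenAI Compatible.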

The rest of this guide uses OpenAI Compatible. Other types have the same core fields with minor naming differences.

Enter a recognizable name in the Name field (for example, Internal LLM Gateway). The name appears everywhere a user picks a model, so make it unambiguous.

Paste the base URL into Endpoint URL.

Most OpenAI-compatible APIs expect the base URL to end in /v1. If your provider documents a URL like https://example.com/api, the correct value here is usually https://example.com/api/v1. The form shows this as helper text under the field. If you are unsure, do not guess: save the URL you have, then use Validate authentication in the next step. A wrong path is the most common cause of a 404 on the first call.
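The `/v1` rule of thumb above can be sketched as a small helper. This is only an illustration: `ensure_v1_suffix` is a hypothetical function, not part of Method, and it encodes the "usually" in the paragraph above rather than a guarantee. Validate authentication remains the real check.

```python
from urllib.parse import urlsplit, urlunsplit


def ensure_v1_suffix(base_url: str) -> str:
    """Return base_url with a trailing /v1 path segment, adding it if absent.

    Heuristic only: some providers use a different path, so always
    confirm the result against the provider's documentation.
    """
    parts = urlsplit(base_url.strip())
    path = parts.path.rstrip("/")  # drop any trailing slash before checking
    if not path.endswith("/v1"):
        path = path + "/v1"
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


print(ensure_v1_suffix("https://example.com/api"))
print(ensure_v1_suffix("https://api.groq.com/openai/v1/"))
```

The first call appends `/v1`; the second leaves the path alone apart from removing the trailing slash.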

Examples:

- https://api.groq.com/openai/v1
- https://api.together.xyz/v1
- https://llm-gateway.internal.example.com/v1

Paste the credential into API Key.

Click Validate authentication. Method sends a small request to the endpoint using the credential you provided.

If validation fails, check the URL (for example, a missing `/v1`), the key (wrong value or missing permissions), and the network path (the endpoint must be reachable from Method).

Always validate before you save. It takes a few seconds and saves you from chasing errors from downstream Agents later.
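If you want to reproduce a similar check from your own machine, the sketch below may help. It does not show what Method actually sends, which this guide does not document. It assumes the endpoint exposes the `GET /models` route with bearer-token authentication that many OpenAI-compatible servers offer, and the status-code mapping in `diagnose` is a rule of thumb. All function names are hypothetical.

```python
import urllib.error
import urllib.request


def build_models_probe(base_url: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a GET request for the model listing route."""
    url = base_url.rstrip("/") + "/models"  # assumed route, not confirmed for every provider
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {api_key}"},
        method="GET",
    )


def diagnose(status: int) -> str:
    """Map an HTTP status to the three things worth checking first."""
    if status == 200:
        return "ok"
    if status in (401, 403):
        return "key: wrong value or missing permissions"
    if status == 404:
        return "URL: check the path, for example a missing /v1"
    return f"unexpected status {status}"


def probe(base_url: str, api_key: str, timeout: float = 10.0) -> str:
    """Send the probe and return a short diagnosis string."""
    request = build_models_probe(base_url, api_key)
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return diagnose(response.status)
    except urllib.error.HTTPError as err:  # server answered with an error status
        return diagnose(err.code)
    except urllib.error.URLError as err:  # DNS failure, refused connection, timeout
        return f"network path: endpoint not reachable ({err.reason})"
```

Note that running `probe` from your laptop only tests reachability from your laptop. The endpoint must also be reachable from Method itself.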

Click Add custom model to add a row. For each model, fill in:

- The model ID, which is sent in the `model` field of every API call. A typo here causes the model to fail even though the provider connection is healthy.
- The model type: `CHAT` for conversational models, `EMBEDDING` for embedding models.

Expand Advanced settings on the row to set Context window (tokens) and Default max tokens if your model has non-standard limits. Leave them blank for Method to use sensible defaults.
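To see why the model ID must match exactly, it can help to look at where it ends up in a request. The sketch below models one row of the form as a hypothetical `CustomModel` record and builds an OpenAI-style chat request body from it. The class, its field names, and the way `default_max_tokens` is applied are illustrative assumptions, not Method's internal schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ModelType(Enum):
    CHAT = "CHAT"
    EMBEDDING = "EMBEDDING"


@dataclass(frozen=True)
class CustomModel:
    """Hypothetical stand-in for one custom model row."""
    model_id: str
    model_type: ModelType
    context_window: Optional[int] = None      # None means "use the platform default"
    default_max_tokens: Optional[int] = None  # None means "use the platform default"


def chat_request_body(model: CustomModel, user_message: str) -> dict:
    """Build an OpenAI-style chat completion body for a CHAT model."""
    if model.model_type is not ModelType.CHAT:
        raise ValueError(f"{model.model_id} is not a CHAT model")
    body = {
        # The ID is passed through verbatim, so a typo reaches the provider unchanged.
        "model": model.model_id,
        "messages": [{"role": "user", "content": user_message}],
    }
    if model.default_max_tokens is not None:
        body["max_tokens"] = model.default_max_tokens
    return body


row = CustomModel("llama-3.3-70b-instruct", ModelType.CHAT, default_max_tokens=1024)
print(chat_request_body(row, "Hello"))
```

Because the ID is copied as-is into `model`, the provider connection can validate successfully while every call for a mistyped model still fails.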

Repeat for every model you want to expose. You can always add, rename, or disable models later.

Click Create provider. The provider appears in the left column with an “Enabled” badge and is immediately selectable across the platform.

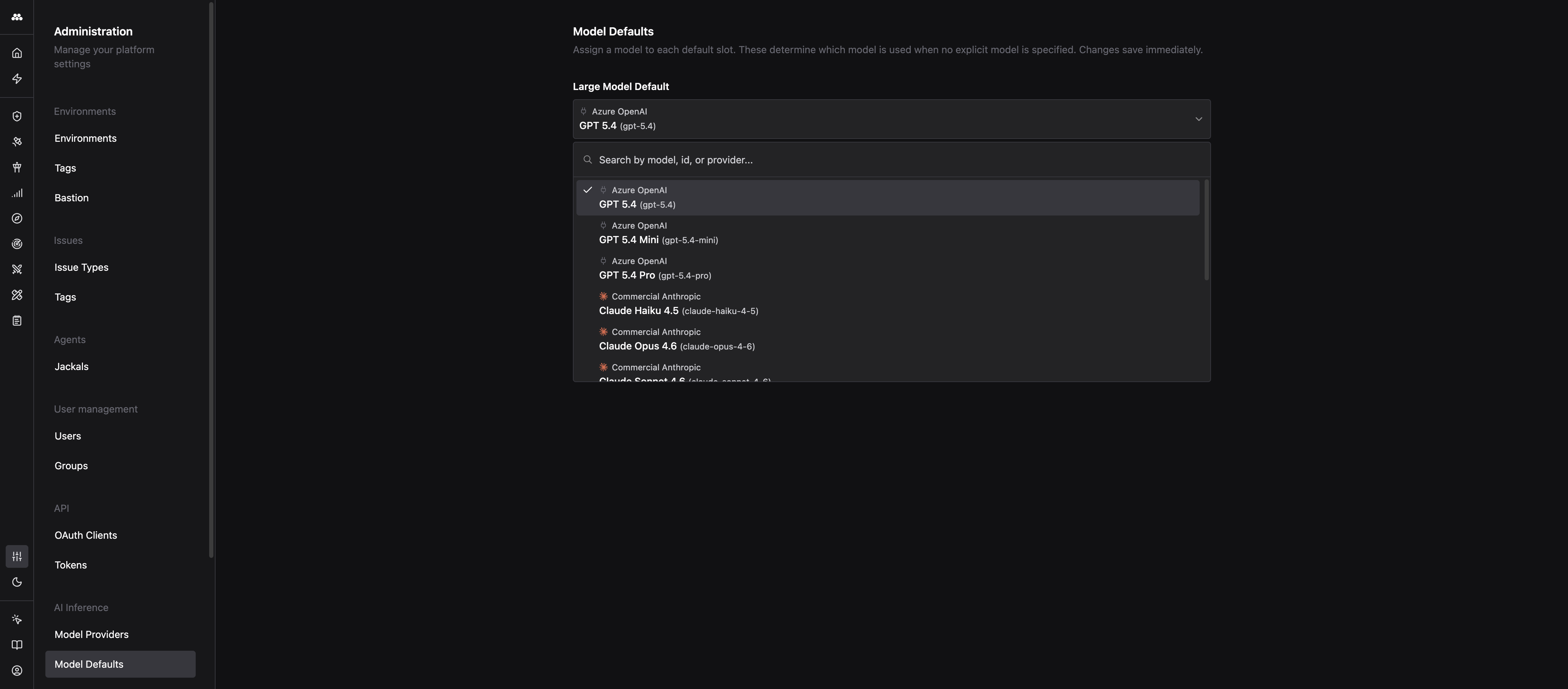

If you want Agents and Operator to use these models by default, go to Admin > Model Defaults and pick one of the new models for the Large, Medium, and Small slots.

Changes save immediately. Every Agent or Task that asks for a size class starts using the new model on the next call. No redeploy required.
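One way to picture why no redeploy is needed: if the size class is looked up at call time, a change to the mapping takes effect on the very next call. The sketch below is a simplified mental model, not Method's actual resolution logic (see AI Inference for that). The `resolve_model` function, the dictionary, and the model assignments are all placeholders.

```python
from typing import Dict


def resolve_model(size_class: str, defaults: Dict[str, str]) -> str:
    """Look up the model ID currently assigned to a size class."""
    key = size_class.strip().lower()
    if key not in defaults:
        raise KeyError(f"no default model configured for size class {size_class!r}")
    return defaults[key]


# Placeholder assignments for the Large, Medium, and Small slots.
defaults = {
    "large": "llama-3.3-70b-instruct",
    "medium": "gpt-4o-mini",
    "small": "gpt-4o-mini",
}

before = resolve_model("Small", defaults)
defaults["small"] = "llama-3.3-70b-instruct"  # an admin changes the Small slot
after = resolve_model("Small", defaults)      # the next call sees the new model

print(before, "->", after)
```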

- Missing `/v1`: the most common cause of 404s on the first request. Fix by appending `/v1` to the URL and revalidating.

For the reference that describes each field and how Model Defaults are resolved, see AI Inference.